Pyscan: Python Dependency Vulnerability Scanner with SBOM Support and Reachability Heuristics

Pyscan v2.1.0: GitHub Repository

Three years ago I shipped a Python vulnerability scanner written in Rust that took 6 minutes to scan 200 dependencies. Today, v2.1.0 scans 1,000+ in 5 seconds and uses less memory than a browser tab. This is what changed.

The problem with vulnerability scanners

Pyscan was engineered to solve the performance and memory bottlenecks of traditional Python-based security tools in production CI/CD pipelines:

- Developers drop slow security tools to keep CI/CD fast, which quietly leaves their projects exposed.

- Tight memory budgets in CI make it hard to justify adding a separate, memory-hungry security tool.

What it does: Pyscan automatically traverses your Python project, extracts dependencies across various packaging formats (uv, poetry, flit, pdm, requirements.txt, SBOMs, even source code), and cross-references them against the Open Source Vulnerabilities (OSV) database.

What's new in v2.1.0

Reachability Heuristics

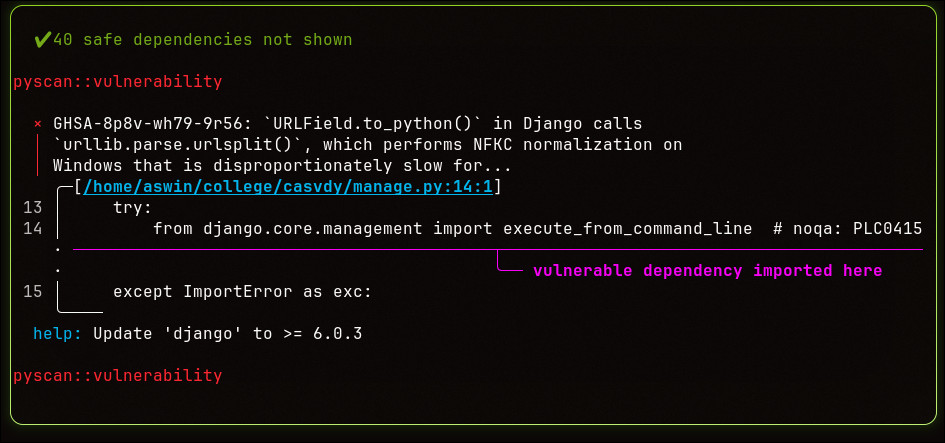

Pyscan now scans your source code to find where you're actually importing vulnerable packages and surfaces them directly in the diagnostic output.

Fair warning: these are regex-based heuristics, not a full call graph. They catch import-level usage, not deep transitive reachability. I'm not going to call it static analysis because it isn't. But it's genuinely useful for quick triage: knowing which file pulls in the vulnerable package is half the remediation battle.

Full reachability analysis with DAG traversal is on the roadmap. This is the foundation.
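To make "import-level, not a call graph" concrete, here is a minimal sketch of what import-level matching can look like. It is not Pyscan's code: Pyscan uses regexes, while this swaps in plain string parsing to stay std-only, and `imported_modules` and `find_usages` are hypothetical names.

```rust
use std::collections::BTreeMap;

/// Hypothetical helper: top-level modules imported on one line of Python source.
/// Only handles simple `import x[.y] [as z], ...` and `from x[.y] import ...`
/// forms; multi-line imports, `__import__`, and imports inside strings are not
/// handled correctly.
fn imported_modules(line: &str) -> Vec<String> {
    let line = line.trim_start();
    if let Some(rest) = line.strip_prefix("from ") {
        // `from pkg.sub import name`: the module is the first token after `from`.
        // Relative imports (`from . import x`, `from .mod import x`) are skipped.
        match rest.split_whitespace().next() {
            Some(module) if !module.starts_with('.') => {
                vec![module.split('.').next().unwrap_or(module).to_string()]
            }
            _ => Vec::new(),
        }
    } else if let Some(rest) = line.strip_prefix("import ") {
        // `import a.b as c, d  # comment`: drop the comment, split on commas,
        // ignore aliases, and keep only the top-level package name.
        rest.split('#')
            .next()
            .unwrap_or("")
            .split(',')
            .filter_map(|part| part.split_whitespace().next())
            .map(|m| m.split('.').next().unwrap_or(m).to_string())
            .collect()
    } else {
        Vec::new()
    }
}

/// Maps each vulnerable package to the (file, 1-based line) pairs importing it.
/// Names are compared as-is, so a distribution whose import name differs
/// (e.g. PyYAML is imported as `yaml`) is missed by this sketch.
fn find_usages<'a>(
    files: &[(&'a str, &str)],
    vulnerable: &[&str],
) -> BTreeMap<String, Vec<(&'a str, usize)>> {
    let mut hits: BTreeMap<String, Vec<(&'a str, usize)>> = BTreeMap::new();
    for &(path, source) in files {
        for (idx, line) in source.lines().enumerate() {
            for module in imported_modules(line) {
                if vulnerable.contains(&module.as_str()) {
                    hits.entry(module).or_default().push((path, idx + 1));
                }
            }
        }
    }
    hits
}
```

The parsing is deliberately crude, which is the trade-off the heuristic makes: cheap, fast, and usually good enough to point you at the right file.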

SBOM Native Support

Pyscan now natively parses CycloneDX (bom.json) and SPDX (spdx.json) files. If an SBOM is present, Pyscan treats it as the source of truth and skips everything else.

The dependency resolution precedence now looks like this:

- SBOMs (bom.json, spdx.json)

- uv.lock

- requirements.txt

- pyproject.toml

- Raw source code (.py)

If you're already generating SBOMs in your pipeline (and you should be), Pyscan will consume them directly.
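The precedence rule boils down to "first match wins". A hypothetical sketch: `pick_source` and the `Source` enum are illustrative names, it only looks at file names at the project root, and checking bom.json before spdx.json is an assumption on my part, since both sit at the same SBOM tier.

```rust
/// Which dependency source the resolver settles on (illustrative only).
#[derive(Debug, PartialEq)]
enum Source {
    Sbom(&'static str),
    UvLock,
    Requirements,
    Pyproject,
    RawSource,
}

/// Picks the single highest-precedence dependency source from the file names
/// found at the project root. Hypothetical helper; the real resolver may
/// search subdirectories or break ties differently.
fn pick_source(file_names: &[&str]) -> Source {
    let has = |name: &str| file_names.iter().any(|f| *f == name);

    // SBOMs win outright: once one is found, nothing else is consulted.
    for sbom in ["bom.json", "spdx.json"] {
        if has(sbom) {
            return Source::Sbom(sbom);
        }
    }
    if has("uv.lock") {
        Source::UvLock
    } else if has("requirements.txt") {
        Source::Requirements
    } else if has("pyproject.toml") {
        Source::Pyproject
    } else {
        // Last resort: scan `.py` files for imports.
        Source::RawSource
    }
}
```

Returning early on the first SBOM mirrors the "source of truth" behavior: lower-precedence files are never opened once a higher one is found.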

Global Parallel Network Wave

This is the one I'm most proud of architecturally.

Previous versions queried OSV in parallel, but inefficiently: because multiple packages often share the same vulnerability IDs, they sent redundant requests to the OSV API. v2.1.0 refactors the fetching logic into a single deduplicated parallel request wave. One batch, no redundancy, significantly lower latency.
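A hypothetical sketch of the deduplicate-then-fetch idea, assuming each package has already been mapped to its vulnerability IDs. `fetch_details` is a stub standing in for a per-ID OSV detail request (no real network I/O happens), the IDs in the example are made up, and scoped std threads stand in for whatever async runtime Pyscan actually uses.

```rust
use std::collections::{BTreeSet, HashMap};
use std::sync::atomic::{AtomicUsize, Ordering};
use std::thread;

/// Counts simulated network calls so the deduplication is observable.
static CALLS: AtomicUsize = AtomicUsize::new(0);

/// Hypothetical stand-in for fetching one advisory's details from OSV.
fn fetch_details(id: &str) -> String {
    CALLS.fetch_add(1, Ordering::SeqCst);
    format!("details for {id}")
}

/// Deduplicates vulnerability IDs across all packages, then fetches each
/// unique ID exactly once, all in parallel in a single wave.
fn fetch_wave(pkg_vulns: &HashMap<&str, Vec<&str>>) -> HashMap<String, String> {
    // Step 1: global dedup, so an ID shared by several packages appears once.
    let unique: BTreeSet<&str> = pkg_vulns.values().flatten().copied().collect();

    // Step 2: one scoped thread per unique ID (a real client would cap concurrency).
    thread::scope(|s| {
        let handles: Vec<_> = unique
            .iter()
            .map(|&id| s.spawn(move || (id.to_string(), fetch_details(id))))
            .collect();
        handles
            .into_iter()
            .map(|h| h.join().expect("fetch thread panicked"))
            .collect::<HashMap<_, _>>()
    })
}
```

Collecting IDs into a set before spawning anything is what removes the redundancy: an ID shared by several packages turns into exactly one request, and every request goes out together in one wave.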

This is also why the memory footprint stays flat: there's no accumulation of in-flight requests or buffered responses. I also removed some unnecessary string allocations and learnt how to use BufReader properly when parsing files.
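Using BufReader well is mostly about reusing one buffer instead of allocating a fresh String per line. A minimal sketch for requirements.txt, assuming only simple `name==version` pins matter; `parse_requirements` is a hypothetical helper, not Pyscan's parser.

```rust
use std::io::{self, BufRead, BufReader, Read};

/// Extracts `(name, pinned version)` pairs from requirements.txt-style input.
/// Hypothetical helper: keeps only `name==version` pins and skips comments,
/// blank lines, options like `-r other.txt`, and unpinned specifiers.
fn parse_requirements<R: Read>(input: R) -> io::Result<Vec<(String, String)>> {
    let mut reader = BufReader::new(input);
    // One String reused for every line instead of allocating per line.
    let mut line = String::new();
    let mut pins = Vec::new();

    while reader.read_line(&mut line)? != 0 {
        // Cut inline comments (`#`) and environment markers (`;`), then trim.
        // `spec` borrows from `line`, so nothing is copied yet.
        let spec = line
            .split(|c: char| c == '#' || c == ';')
            .next()
            .unwrap_or("")
            .trim();
        if !spec.is_empty() && !spec.starts_with('-') {
            if let Some((name, version)) = spec.split_once("==") {
                // Allocate only for the pins we actually keep.
                pins.push((name.trim().to_string(), version.trim().to_string()));
            }
        }
        line.clear(); // keep the capacity, discard the contents
    }
    Ok(pins)
}
```

Any `Read` works as input, so the same function handles a `File` in production and a byte slice in tests.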

The benchmarks

Tested against 88 dependencies. The full reproducible benchmark suite is in the repo.

| Tool | Execution Time | Peak Memory (RSS) |
|---|---|---|
| Pyscan | 6.9s | 53MB |
| Safety | 10.4s | 320MB |
| pip-audit | 62.2s | 433MB |

The memory number is the one worth staring at. pip-audit at 433MB in a memory-constrained CI environment will cost you a lot of money. Pyscan at 53MB is kinda chill, relatively.
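For a sense of scale, here are the ratios from the benchmark table (plain arithmetic on the numbers already reported, no new measurements):

```latex
\frac{433\ \text{MB}}{53\ \text{MB}} \approx 8.2, \qquad
\frac{320\ \text{MB}}{53\ \text{MB}} \approx 6.0, \qquad
\frac{62.2\ \text{s}}{6.9\ \text{s}} \approx 9.0
```

So pip-audit peaks at roughly 8x Pyscan's RSS and Safety at roughly 6x, while pip-audit also takes about 9x as long on the same 88 dependencies.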

Runtime complexity is O(vulns), not O(deps). Scanning a clean project with 1,000 dependencies takes roughly the same time as scanning one with 15. The bottleneck is the number of vulnerabilities found, not the dependency count, because OSV doesn't have a batched endpoint for detailed vulnerability information: each advisory's details have to be fetched separately.
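A back-of-the-envelope model of why cost tracks vulnerabilities rather than dependencies. It assumes, hypothetically, that dependency lookups go out in batches of `batch_size` queries that return only vulnerability IDs, and that every unique ID then needs its own detail request; `request_count` is illustrative, not Pyscan's code.

```rust
/// Rough request-count model for the "O(vulns), not O(deps)" claim.
/// `deps`: dependencies to look up; `unique_vulns`: distinct vulnerability IDs
/// found; `batch_size`: assumed number of dependency queries per batch request.
fn request_count(deps: usize, unique_vulns: usize, batch_size: usize) -> usize {
    assert!(batch_size > 0, "batch_size must be positive");
    // Dependencies contribute only ceil(deps / batch_size) batch requests...
    let batch_requests = (deps + batch_size - 1) / batch_size;
    // ...while every unique vulnerability costs one detail request of its own.
    batch_requests + unique_vulns
}
```

With an assumed batch size of 1,000, `request_count(15, 0, 1000)` and `request_count(1000, 0, 1000)` both come out to 1, while `request_count(15, 40, 1000)` is 41: the dependency count barely moves the total, and the vulnerability count drives it.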

If you use uv, you don't need Pyscan. uv audit is on par and sometimes faster.

Pyscan is for everyone else.

What's next

The roadmap for upcoming releases:

- Better CI/CD QoL: structured output formats, exit codes, GitHub Actions integration

- Persistent security posture state: track your project's vulnerability history over time

- Proper DAG-based transitive dependency analysis

- Trying to close the gap with uv audit on raw speed

Installation

via pipx (recommended)

pipx install pyscan-rs

via pip

pip install pyscan-rs

via cargo

cargo install pyscan

40,000+ combined downloads across PyPI and crates.io. Featured on the Real Python Podcast. Still maintained by one broke college student between classes.

GitHub repo: PRs and issues are warmly welcomed.